Quite a number of compilers, or more specifically linkers, will build part of an application using DP and other parts using SP; however, such applications will report that they require DP capability to run. This means that they will fail to run on a processor that has neither built-in DP nor some form of "DP emulation". Of course, it is possible to compile DP emulation into the application so that it will run on SP processors, but that really does lead to a considerable loss in performance (the last time I used one of these compilers, execution time increased more than ten-fold compared with the same code running in SP-only mode).

Discussions about speed advantages are fairly moot in applications where accuracy is vital. Let's say one uses SP to calculate the orbital mechanics for an asteroid, and the result shows it will miss Earth by a safe margin; however, running the same code using DP, one finds that on the same orbit the asteroid will collide with Earth. In such a case I would rather take a bit longer (even ten times longer) to get to the correct answer than rush in and get the wrong answer.

Here are Collatz Home performance data, generated using my Macs and optimized crunching parameters. Some folks may achieve better numbers than me through undervolting, overclocking, and better optimization of the crunching parameters. And, of course, the performance of a particular GPU under MacOS may be different than under Windows or Linux. Finally, some projects may be better at utilizing AMD GPUs than NVIDIA GPUs, and vice versa. So, I agree with the advice of one of the previous posters that you check the message boards of the projects for which you crunch. FWIW, NVIDIA GPUs appear to be a much better bang for the buck than AMD GPUs when crunching Collatz Home tasks. But the most recent versions of the MacOS are not compatible with contemporary NVIDIA GPUs.

The 3080 is on average 10-15% faster than the 2080Ti.
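To make the SP-versus-DP accuracy point above a bit more concrete, here is a minimal sketch. It is not taken from any BOINC project: the helper names `to_sp` and `accumulate` are made up for illustration, and SP arithmetic is only emulated by rounding each intermediate result to a 32-bit float with the standard `struct` module. It simply adds 0.1 a million times, once with every partial sum rounded to SP and once in plain DP.

```python
import struct


def to_sp(x: float) -> float:
    """Round a Python float (IEEE 754 double) to the nearest single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]


def accumulate(step: float, n: int, single: bool) -> float:
    """Add `step` to a running total `n` times.

    With single=True the step and every partial sum are rounded to SP,
    which emulates doing the whole loop in 32-bit floats.
    """
    if single:
        step = to_sp(step)
    total = 0.0
    for _ in range(n):
        total += step
        if single:
            total = to_sp(total)
    return total


if __name__ == "__main__":
    n = 1_000_000
    exact = 0.1 * n  # the mathematically expected answer, 100000
    for label, single in (("SP", True), ("DP", False)):
        result = accumulate(0.1, n, single)
        print(f"{label}: total = {result!r}, error = {result - exact!r}")
```

Run as a script, the SP total should end up off by a large amount while the DP total stays within a tiny fraction of a unit. An orbit integration is far more involved than a running sum, but the mechanism is the same: rounding error builds up over many steps.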

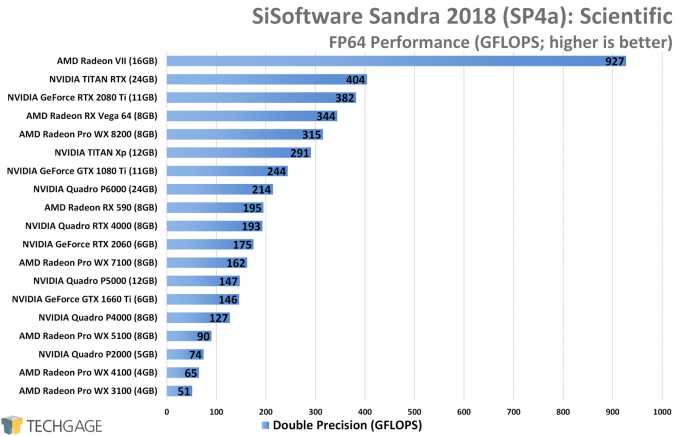

I normally consider which card to buy for Boinc based on the "theoretical floating point speed" quoted for the card in Techpowerup reviews, taking account of whether the project I want to run it on is single or double precision. But I noticed on PrimeGrid that Cuda tasks are horrendously faster than they should be (by a factor of 2, judging by other people's results; I only have AMD cards at present). On Einstein there seems to be very little difference. Does anyone know which projects write more efficient Cuda code and how much of a difference it makes? I'm trying to work out if it's worth buying a more expensive and theoretically slower Nvidia card because it can run the better Cuda coding on some projects.

So far, the best bang for your buck is not always the GPU that'll get you the best performance. To get the best, get the GPU with the most cuda cores. If it has too many cuda cores (most likely), you'll be able to double, triple, quadruple, octuple, whatever-uple the project until it's running at maximum performance. If you're looking for the best bang for the buck, it'll be a toss-up between the $700 3080 and the $500 3070. I'm leaning towards the 3080, because it's faster and has faster RAM, but it also uses 325W, vs 225W on the 3070 (stock). The 3080 can be power-limited to 250W, or perhaps even lower, and still run about as fast as it does at stock. The 3070 is supposed to go for $500, but it'll be a while until it reaches this price. All RTX 3000 series GPUs (3090, 3080, 3070) are currently in high demand, and scalpers are trying to sell them for 2 to 3x MSRP.

So it'll be up to you to decide what $$$ amount you want to spend in terms of initial purchase price and running cost, and what your case can handle in terms of heat. Give them 1 to 2 months for prices to come down. The $500 MSRP 3070 would perform very similarly to the $1200 MSRP RTX 2080Ti.
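For anyone who wants to put rough numbers on the "bang for the buck" comparison above, here is a small sketch. The prices and stock wattages are the ones quoted in this thread. The relative performance figures are assumptions: the 2080Ti is set to 1.0, the 3070 is taken as equal to it, and the 3080 is taken as 12.5% faster (the midpoint of the 10-15% mentioned in the thread). The `Gpu` class and the helper functions are made up for illustration, and no wattage was quoted for the 2080Ti, so it is left out of the per-watt figure.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Gpu:
    name: str
    msrp_usd: float          # MSRP as quoted in the thread
    rel_perf: float          # ASSUMED relative throughput, 2080Ti = 1.0
    watts: Optional[float]   # stock board power as quoted in the thread, if any


def perf_per_dollar(gpu: Gpu) -> float:
    """Relative performance per US dollar of purchase price."""
    return gpu.rel_perf / gpu.msrp_usd


def perf_per_watt(gpu: Gpu) -> Optional[float]:
    """Relative performance per watt, or None when no wattage is known."""
    return None if gpu.watts is None else gpu.rel_perf / gpu.watts


CARDS = [
    Gpu("RTX 2080Ti", 1200, 1.0, None),
    Gpu("RTX 3070", 500, 1.0, 225),      # assumed equal to the 2080Ti
    Gpu("RTX 3080", 700, 1.125, 325),    # assumed midpoint of 10-15% faster
]

if __name__ == "__main__":
    # Rank the cards by value for money, best first.
    for gpu in sorted(CARDS, key=perf_per_dollar, reverse=True):
        per_watt = perf_per_watt(gpu)
        watt_text = "n/a" if per_watt is None else f"{per_watt * 100:.2f}"
        print(f"{gpu.name:<11} perf per $1000: {perf_per_dollar(gpu) * 1000:.2f}"
              f"   perf per 100W: {watt_text}")
```

Under these assumptions the 3070 comes out ahead on both measures at MSRP. Swap in the street price you can actually get and, if you power-limit the card, the wattage you actually run at, since either can change the ranking.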
